Computing Industry Solutions

Building a unified, verifiable time base for critical operations to ensure data center business continuity, stability, and high availability

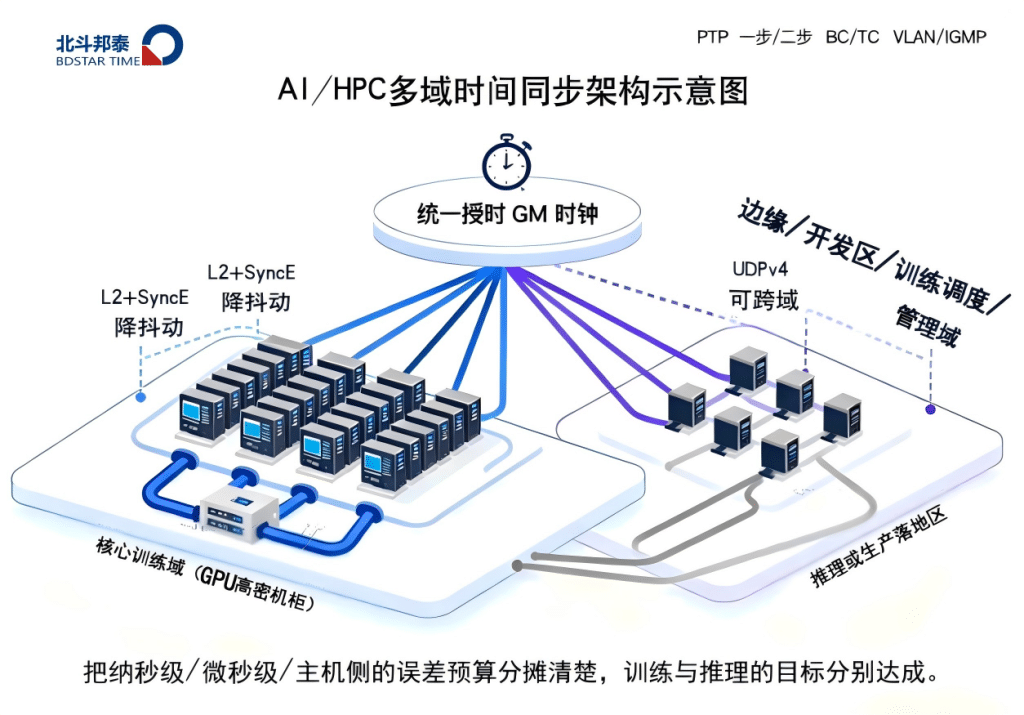

In AI, HPC, edge computing, and other high-performance scenarios, compute capacity keeps expanding. Yet what really determines system stability, ordering consistency, and task coordination is an often-overlooked but crucial foundational capability: time.

As clusters grow from tens of GPUs to thousands, critical functions such as GPU batch windows, synchronization barriers, causal ordering of event streams, and inference task scheduling require the entire system to share a unified, verifiable timeline. When time is inconsistent, a computing system under high load suffers from queuing chaos, window misclassification, task disorder, broken audit chains, and other problems that are hard to localize. Rebuilding the time base for the computing industry is therefore an unavoidable engineering foundation for the AI era.

Why rebuild the "time base"?

NTP has been widely used in computing systems for the past decade, but its application-layer request-response model turns link jitter, queuing delay, and path uncertainty into timing errors that can easily grow from microseconds to milliseconds. For AI/HPC, that is a disaster.
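The error mechanism can be sketched with the standard NTP on-wire calculation (as defined in RFC 5905): the client estimates its offset from four timestamps and implicitly assumes the outbound and return paths take equal time. Any asymmetry, such as queuing in only one direction, appears as an offset error of half the asymmetry. The helper names and the microsecond values below are illustrative assumptions, not measurements.

```python
def ntp_offset_delay(t1, t2, t3, t4):
    """NTP on-wire offset and round-trip delay.

    t1: client transmit time (client clock)
    t2: server receive time (server clock)
    t3: server transmit time (server clock)
    t4: client receive time (client clock)
    """
    offset = ((t2 - t1) + (t3 - t4)) / 2
    delay = (t4 - t1) - (t3 - t2)
    return offset, delay


def simulate_exchange(true_offset, fwd_delay, back_delay, server_hold=0, t1=0):
    """Hypothetical helper: build the four timestamps for a client whose clock
    lags the server by `true_offset`, with one-way delays that may differ."""
    t2 = t1 + fwd_delay + true_offset    # arrival, read on the server clock
    t3 = t2 + server_hold                # server processing time
    t4 = t3 - true_offset + back_delay   # arrival, read on the client clock
    return t1, t2, t3, t4


if __name__ == "__main__":
    # Symmetric 50 us paths: the offset estimate is exact.
    print(ntp_offset_delay(*simulate_exchange(0, 50, 50)))
    # 2 ms of queuing on the return path only: a 1 ms offset error appears.
    print(ntp_offset_delay(*simulate_exchange(0, 50, 2050)))
```

Note that the round-trip delay is still measured correctly in the asymmetric case; it is only the split between the two directions that NTP cannot observe.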

The introduction of PTP changed the way time is transmitted:

Timestamping moves down from the host kernel to the NIC/PHY and the switches (boundary clocks/transparent clocks)

Delay at every hop is measured and corrected

Combined with SyncE, frequency and phase can both be tightly locked

As a result, microsecond accuracy is becoming the norm, and nanosecond accuracy is no longer rare
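The effect of per-hop correction can be sketched with the IEEE 1588 end-to-end delay request-response calculation. A transparent clock adds each message's residence time inside the switch to the `correctionField`, and the slave subtracts it before computing its offset, so queuing inside the switch no longer leaks into the result. This is a simplified sketch assuming one-step timestamps, a single transparent clock, and a symmetric cable delay; the function names and nanosecond values are hypothetical.

```python
def ptp_e2e_offset(t1, t2, t3, t4, corr_sync=0, corr_delay_req=0):
    """Simplified IEEE 1588 end-to-end offset and mean path delay.

    t1: Sync sent (master clock)      t2: Sync received (slave clock)
    t3: Delay_Req sent (slave clock)  t4: Delay_Req received (master clock)
    corr_*: residence time accumulated in correctionField by transparent clocks
    """
    master_to_slave = (t2 - t1) - corr_sync
    slave_to_master = (t4 - t3) - corr_delay_req
    mean_path_delay = (master_to_slave + slave_to_master) / 2
    offset_from_master = master_to_slave - mean_path_delay
    return offset_from_master, mean_path_delay


def simulate(offset, link_delay, residence_sync, residence_req, t1=0, t3=10_000):
    """Hypothetical scenario: the slave clock runs `offset` ns ahead of the master,
    and each message waits in one transparent clock for the given residence time."""
    t2 = t1 + link_delay + residence_sync + offset
    t4 = t3 - offset + link_delay + residence_req
    return t1, t2, t3, t4


if __name__ == "__main__":
    ts = simulate(offset=40, link_delay=500, residence_sync=3_000, residence_req=200)
    # Ignoring correctionField: switch queuing is misread as clock offset.
    print(ptp_e2e_offset(*ts))
    # Applying correctionField: the true 40 ns offset is recovered.
    print(ptp_e2e_offset(*ts, corr_sync=3_000, corr_delay_req=200))
```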

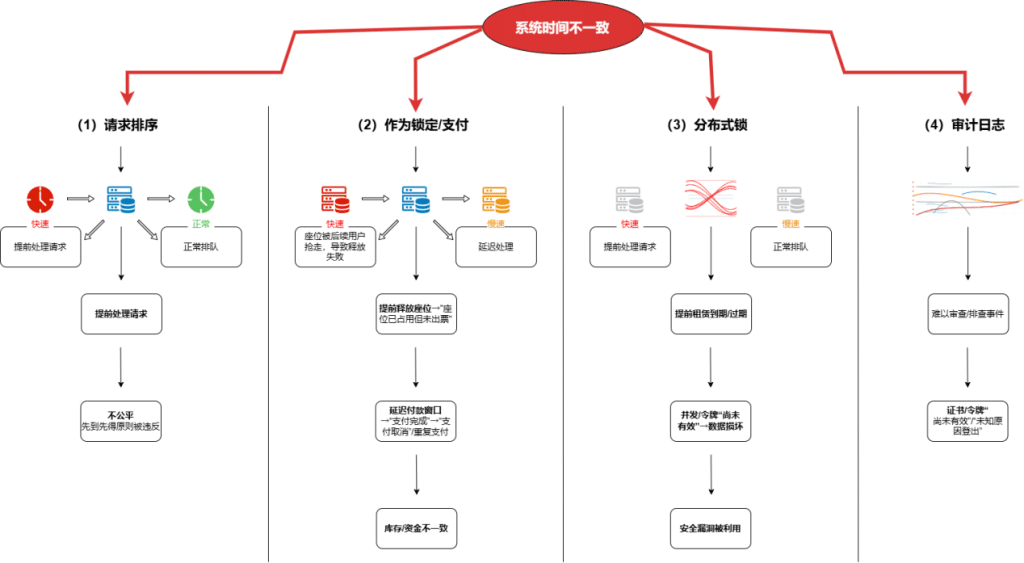

Risks associated with inconsistent timing

GPU/CPU batch window misclassification: training batches are split or misaligned, degrading throughput

Synchronization barriers triggered early or late: multi-device training efficiency drops

Streaming window disorder: events from the same batch are processed twice or missed entirely

Transactions and logs out of order: schedulers and audit systems cannot reliably reconstruct what happened

Inference service timeout misclassification: requests are dropped too early or returned too late

Cross-node resource contention: the scheduler cannot allocate resources correctly against time budgets

These problems occur more frequently the larger the cluster size and the higher the load.
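To see how a small clock skew turns into window misclassification and ordering errors, consider a tumbling-window assignment keyed on each node's local timestamp. The sketch below assumes 1-second windows and two nodes with +10 ms and -5 ms skew; the `Event` type and all values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    name: str
    true_time_ms: int  # when the event really happened
    node_skew_ms: int  # recording node's clock error: + ahead, - behind

    @property
    def stamped_ms(self) -> int:
        # The timestamp the node actually writes into the event
        return self.true_time_ms + self.node_skew_ms


def window_of(ts_ms: int, window_ms: int = 1_000) -> int:
    """Tumbling-window index for a timestamp."""
    return ts_ms // window_ms


if __name__ == "__main__":
    request = Event("request", true_time_ms=995, node_skew_ms=+10)
    response = Event("response", true_time_ms=998, node_skew_ms=-5)
    events = [request, response]

    # The request truly belongs to window 0 but is filed under window 1.
    print(window_of(request.true_time_ms), window_of(request.stamped_ms))
    # Ordering by recorded time puts the response before its request.
    print([e.name for e in sorted(events, key=lambda e: e.stamped_ms)])
```

A 15 ms disagreement between two nodes is enough to break both the window assignment and the causal order here, even though neither clock is far from true time.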

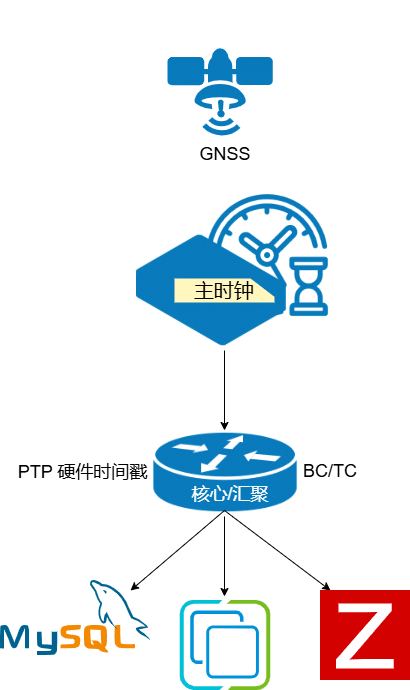

Time architecture for the computing industry: self-provisioned on the intranet, aligned first, then tightened

Self-provisioned intranet time as the primary mode

1. GNSS (BeiDou/GPS) antenna signal fed directly into the machine room

2. A local clock server provides unified time

3. No exposure to public-network time hijacking or third-party time jitter

Existing equipment is not retrofitted; NTP brings it into alignment first

In the first phase, NTP aligns all existing servers, with no impact on the current network and no business interruption

Core compute nodes gradually switch to PTP

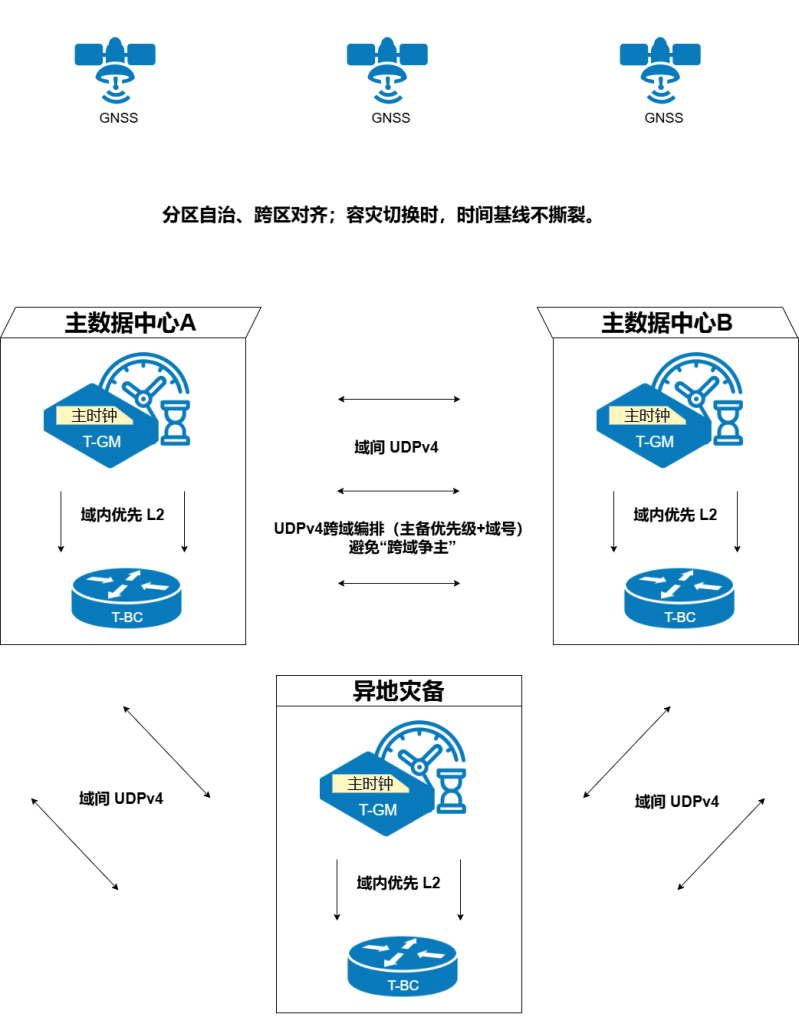

Within the same campus, use G.8275.1 (L2 + SyncE)

Across campuses and Layer 3 networks, use G.8275.2

Configure a multi-GM primary/standby architecture using domain numbers and priorities
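Primary/standby GM selection by priority can be sketched with the dataset comparison order of the default IEEE 1588 Best Master Clock Algorithm: `priority1`, then `clockClass`, `clockAccuracy`, `offsetScaledLogVariance`, `priority2`, and finally `clockIdentity` as a tie-breaker, with lower values winning. This is a simplification: it ignores `stepsRemoved` and topology, and the G.8275.x telecom profiles use an alternate BMCA that adds a per-port `localPriority`. The class, field values, and GM names are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class GmCandidate:
    name: str                        # hypothetical label for the GM
    priority1: int
    clock_class: int                 # e.g. 6 = locked to a primary reference such as GNSS
    clock_accuracy: int
    offset_scaled_log_variance: int
    priority2: int
    clock_identity: str              # fixed-width hex string, so string order matches numeric order

    def rank_key(self):
        # Lower tuple wins, compared field by field
        return (self.priority1, self.clock_class, self.clock_accuracy,
                self.offset_scaled_log_variance, self.priority2, self.clock_identity)


def select_gm(candidates):
    """Return the best candidate under the simplified comparison above."""
    return min(candidates, key=GmCandidate.rank_key)


if __name__ == "__main__":
    gm_a = GmCandidate("gm-a", 128, 6, 0x21, 0x4E5D, 100, "000000fffe00000a")
    gm_b = GmCandidate("gm-b", 128, 6, 0x21, 0x4E5D, 110, "000000fffe00000b")
    print(select_gm([gm_a, gm_b]).name)  # primary wins on lower priority2

    # gm-a loses GNSS and enters holdover, advertising a degraded clockClass
    gm_a_holdover = replace(gm_a, clock_class=7)
    print(select_gm([gm_a_holdover, gm_b]).name)  # standby takes over
```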

Solutions Overview

GNSS antenna → clock server (OCXO/rubidium) → PTP (L2 + SyncE) distribution to switches and hosts; NTP serves existing hosts for compatibility.

Each site has its own GNSS receiver and local GM, with per-domain synchronization policies and priority-based failover; off-site disaster recovery uses PTP over UDPv4 so that timing traverses routed networks and stays consistent.

PTP domains are partitioned by business or cluster, with training, inference, and storage managed separately to keep jitter low and make nanosecond-level accuracy achievable.
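A per-business PTP domain plan can be checked before rollout. The sketch below assumes the domain number ranges commonly cited for the telecom profiles (24 to 43 for G.8275.1, 44 to 63 for G.8275.2; verify against the ITU-T text and your vendor's documentation) and treats every domain number as unique across the whole plan. The business names and domain assignments are hypothetical.

```python
# Assumed domainNumber ranges for the ITU-T telecom profiles
PROFILE_DOMAINS = {
    "G.8275.1": range(24, 44),  # L2 + SyncE, same campus
    "G.8275.2": range(44, 64),  # PTP over UDPv4, cross-campus / Layer 3
}


def validate_domain_plan(plan):
    """plan maps business/cluster name -> (profile, domain number).
    Returns a list of human-readable problems; an empty list means the plan passes."""
    problems = []
    owner = {}  # domain number -> first business that claimed it
    for business, (profile, domain) in plan.items():
        allowed = PROFILE_DOMAINS.get(profile)
        if allowed is None:
            problems.append(f"{business}: unknown profile {profile}")
        elif domain not in allowed:
            problems.append(
                f"{business}: domain {domain} outside {profile} range "
                f"{allowed.start}-{allowed.stop - 1}")
        if domain in owner:
            problems.append(f"{business}: domain {domain} already used by {owner[domain]}")
        else:
            owner[domain] = business
    return problems


if __name__ == "__main__":
    plan = {
        "training":  ("G.8275.1", 24),
        "inference": ("G.8275.1", 25),
        "storage":   ("G.8275.1", 26),
        "dr-site":   ("G.8275.2", 44),
    }
    print(validate_domain_plan(plan) or "plan OK")
```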

Connecting devices to the existing network: a three-step rollout path

Preparation phase

- Confirm GNSS antenna position, feed line, and sky view

- Check whether switches support PTP hardware timestamping and BC/TC

- Configure VLANs, routing, bonds, and management/service ports

- Security policy opens only timing and remote-management ports

Commissioning phase

- Power up the device → configure the time zone → set holdover parameters

- Start GNSS acquisition

- Enable NTP service for existing hosts

- Enable PTP (L2/SyncE or UDPv4) per domain
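Holdover parameters are easier to set with a rough error budget in hand. A common first-order model accumulates time error from the oscillator's fractional frequency offset at the moment GNSS is lost, plus a quadratic term for frequency drift (aging): TE(t) = TE0 + y0·t + ½·D·t². The sketch below applies that model and ignores temperature effects; the function names and example figures are hypothetical illustrations, not specifications of any OCXO or rubidium product.

```python
import math

SECONDS_PER_DAY = 86_400


def holdover_time_error(t_s, freq_offset, aging_per_day=0.0, initial_te_s=0.0):
    """Time error (seconds) after t_s seconds of holdover.

    freq_offset:   fractional frequency offset at holdover entry (e.g. 1e-10)
    aging_per_day: fractional frequency drift per day (e.g. 1e-10/day)
    """
    drift_per_s = aging_per_day / SECONDS_PER_DAY
    return initial_te_s + freq_offset * t_s + 0.5 * drift_per_s * t_s ** 2


def max_holdover_s(budget_s, freq_offset, aging_per_day=0.0):
    """Longest holdover before |time error| reaches budget_s, assuming the
    offset and drift push in the same direction (worst case)."""
    a = 0.5 * abs(aging_per_day) / SECONDS_PER_DAY
    b = abs(freq_offset)
    if a == 0 and b == 0:
        return math.inf
    # Positive root of a*t^2 + b*t - budget = 0, in a cancellation-free form
    return 2 * budget_s / (b + math.sqrt(b * b + 4 * a * budget_s))


if __name__ == "__main__":
    # Illustrative values only: 1e-10 offset with 1e-10/day aging, 1 us budget
    print(f"TE after 1 h: {holdover_time_error(3600, 1e-10, 1e-10) * 1e9:.1f} ns")
    print(f"Holdover for 1 us budget: {max_holdover_s(1e-6, 1e-10, 1e-10) / 3600:.2f} h")
```

In practice the frequency offset at holdover entry usually dominates over short outages, which is why a well-disciplined oscillator matters as much as its aging specification.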

Ramp-up and rollback

- Connect a small number of servers first to verify offset and jitter

- Then expand gradually to the whole cluster
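During ramp-up, the pilot check can be as simple as summarizing each host's offset samples and comparing them with agreed thresholds. The sketch assumes the samples (in nanoseconds, relative to the GM) have already been collected from whatever your PTP/NTP tooling reports; collection is not shown, and the function names and the 1 µs / 200 ns thresholds are hypothetical placeholders, not recommended values.

```python
import statistics


def summarize_offsets(samples_ns):
    """Mean offset, jitter (population std. dev.) and worst-case |offset|."""
    return {
        "mean_ns": statistics.fmean(samples_ns),
        "jitter_ns": statistics.pstdev(samples_ns),
        "max_abs_ns": max(abs(s) for s in samples_ns),
    }


def failing_hosts(per_host_samples, max_abs_ns=1_000, max_jitter_ns=200):
    """Return {host: summary} for every pilot host outside the thresholds."""
    failures = {}
    for host, samples in per_host_samples.items():
        summary = summarize_offsets(samples)
        if summary["max_abs_ns"] > max_abs_ns or summary["jitter_ns"] > max_jitter_ns:
            failures[host] = summary
    return failures


if __name__ == "__main__":
    pilot = {
        "node-01": [12, -20, 15, -5, 8],
        "node-02": [900, 1_500, -300, 50, 400],
    }
    bad = failing_hosts(pilot)
    print("expand rollout" if not bad else f"hold rollout, check: {sorted(bad)}")
```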

Keep a bypass time source ready as a fallback to protect the business

Security: keeping the time chain under your own control

Clock servers are deployed on the intranet and do not rely on public time sources on the internet

Minimal open ports: only timing and O&M interfaces are exposed

SNMPv3 for monitoring, token-based authentication for APIs

All changes are recorded in the audit log

Unified time is the strongest forensic baseline: logs from different systems can be cross-checked against each other
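Cross-checking logs depends on exactly this property. Once every node stamps its logs from the same time base, per-node logs can be merged into one timeline, and simple causal checks become meaningful, such as "a request is never received before it is sent". The sketch assumes each per-node log is already sorted by time and carries a request ID; the record layout and field names are hypothetical.

```python
import heapq
from typing import NamedTuple


class LogRecord(NamedTuple):
    ts_ns: int    # timestamp from the node's (synchronized) clock
    node: str
    kind: str     # "send" or "recv"
    req_id: str


def merge_logs(*per_node_logs):
    """Merge per-node logs (each already sorted by ts_ns) into one timeline."""
    return list(heapq.merge(*per_node_logs, key=lambda r: r.ts_ns))


def causality_violations(records):
    """Request IDs whose "recv" is stamped earlier than the matching "send"."""
    sent_at = {r.req_id: r.ts_ns for r in records if r.kind == "send"}
    return [r.req_id for r in records
            if r.kind == "recv" and r.req_id in sent_at and r.ts_ns < sent_at[r.req_id]]


if __name__ == "__main__":
    gateway = [LogRecord(1_000, "gw", "send", "req-1"), LogRecord(2_000, "gw", "send", "req-2")]
    worker = [LogRecord(900, "w1", "recv", "req-1"), LogRecord(2_300, "w1", "recv", "req-2")]
    timeline = merge_logs(gateway, worker)
    for rec in timeline:
        print(rec)
    # req-1 is flagged, which suggests the worker's clock lags the gateway's
    print(causality_violations(timeline))
```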

Time is not only a performance foundation but also a security foundation.

O&M: making time status visible at a glance

Visualization and monitoring: GNSS lock status, UTC offset, PTP/NTP process status, offset/jitter curves, CPU/memory/temperature, and oscillator holdover state

Alarms: GNSS satellite loss, offset over threshold, primary/standby switchover, timing path changes
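The alarm items above map naturally onto a comparison between two consecutive status snapshots. The sketch assumes a hypothetical `TimingStatus` snapshot polled from the clock server (for example over SNMPv3 or its API; collection is not shown), a 1 µs offset threshold, and a minimum of four tracked satellites; all of these are placeholders to tune per site.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class TimingStatus:
    gnss_locked: bool
    satellites: int
    offset_ns: float               # offset as reported by the monitoring source
    active_gm: str
    timing_path: Tuple[str, ...]   # e.g. GM -> BC -> ... hop identifiers


def evaluate_alarms(prev: Optional[TimingStatus], cur: TimingStatus,
                    offset_threshold_ns: float = 1_000, min_satellites: int = 4):
    """Return the alarm names raised by the current snapshot."""
    alarms = []
    if not cur.gnss_locked or cur.satellites < min_satellites:
        alarms.append("GNSS_SATELLITE_LOSS")
    if abs(cur.offset_ns) > offset_threshold_ns:
        alarms.append("OFFSET_OVER_THRESHOLD")
    if prev is not None and prev.active_gm != cur.active_gm:
        alarms.append("GM_SWITCHOVER")
    if prev is not None and prev.timing_path != cur.timing_path:
        alarms.append("TIMING_PATH_CHANGE")
    return alarms


if __name__ == "__main__":
    before = TimingStatus(True, 12, 25.0, "gm-a", ("gm-a", "bc-1"))
    after = TimingStatus(False, 0, 1_500.0, "gm-b", ("gm-b", "bc-2"))
    print(evaluate_alarms(None, before))
    print(evaluate_alarms(before, after))
```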

Frequently Asked Questions (FAQ)

Can public cloud timing replace local clocks?

No. What you need is time that is unified and verifiable, not merely time that exists.

Do existing servers need to be modified?

No. NTP aligns them first, and PTP then incrementally tightens accuracy in the key domains.

Why does it have to be PTP?

Because PTP tightens the error from milliseconds to microseconds/nanoseconds, it is a necessary foundation for AI/HPC.

Want to take your data center time accuracy from "working" to an engineering-grade foundation that can be re-verified? Contact us for a customized assessment and rollout plan covering network adaptation, pilot deployment, monitoring, and O&M delivery.